Multi-Agent Orchestration Patterns: Architecting the "Swarm" Enterprise

Architecture guide for coordinating autonomous agent swarms. We cover the Master-Agent delegation pattern, the Model Context Protocol (MCP), and how to debug non-deterministic distributed systems.

Summary: A single agent is a chatbot. Multiple agents are an organization. The challenge of 2026 isn’t building a smart agent; it’s getting five of them to agree on a plan without getting stuck in an infinite loop. This guide details the Master-Agent Delegation pattern and the emerging Model Context Protocol (MCP) standard.

1) Executive Summary

As enterprises move from “Chat” to “Work,” we have hit the limits of the single-model paradigm. You cannot ask one LLM to “Write code, check compliance, debug errors, and deploy to Kubernetes” in a single prompt context; it tends to hallucinate or silently skip steps. The solution is Multi-Agent Orchestration: breaking the workflow into specialized personas (Coder, Reviewer, DevOps) that collaborate. Real-world implementations like Robylon and Amazon Bedrock Agents illustrate that success depends on rigid communication protocols and “Supervisor” nodes that prevent agentic drift[1].

2) The Core Pattern: Master-Worker Delegation

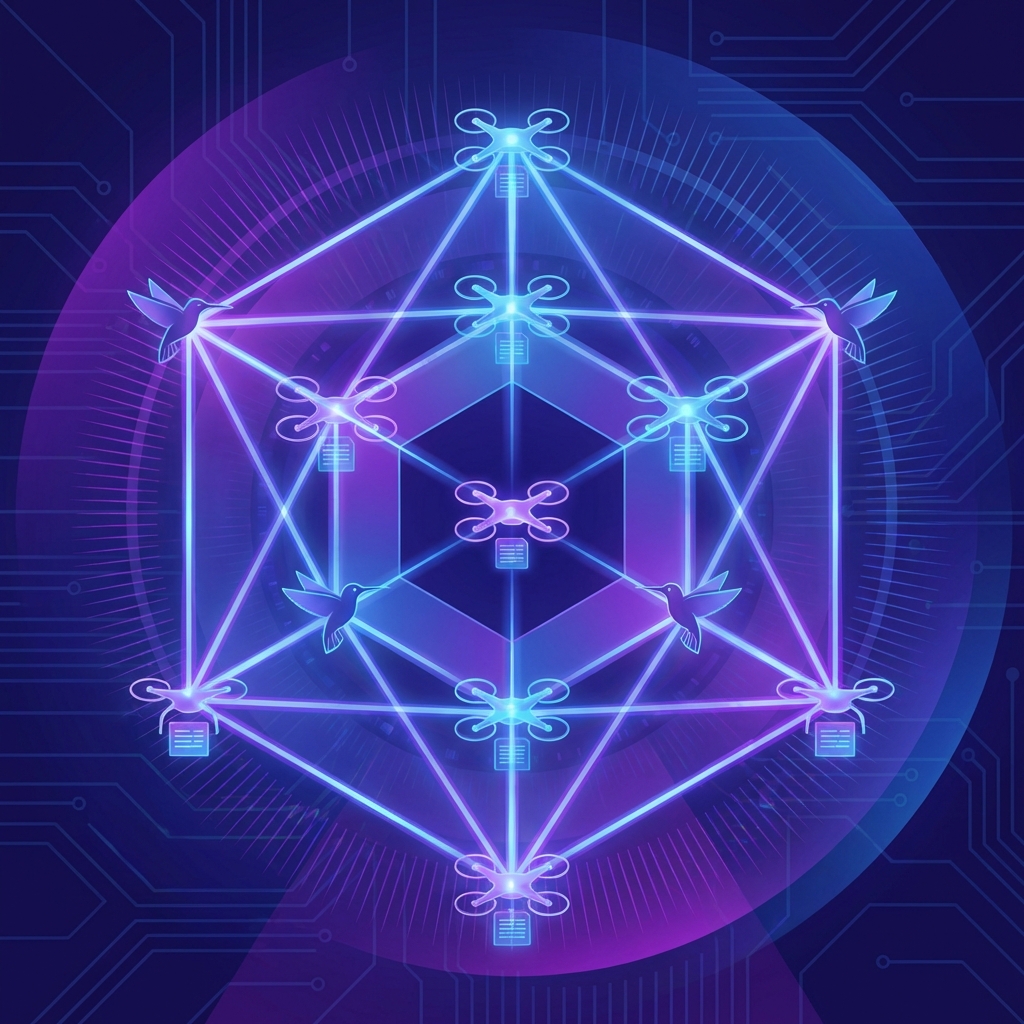

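The “Flat Chat” topology, where every agent talks to every other agent, scales poorly: N agents need N(N-1)/2 pairwise connections, which grows as O(N^2). Ten fully connected agents already mean 45 channels, while ten workers attached to a single hub need only 10. Production systems therefore use a Hierarchical Hub-and-Spoke topology.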

The Roles

- The User Proxy: Interfaces with the human. Clarifies intent.

- The Master Orchestrator (Supervisor): Does not “do” work. It plans. It receives the goal, breaks it into steps, and routes tasks to workers. Critical: It holds the “State” of the project.

- Specialist Workers: Narrowly scoped agents, for example:

  - Graph Agent: “I only generate Plotly charts.”

  - SQL Agent: “I only write read-only SELECT queries.”
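
The roles above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a production design: the `Master` class, the two stand-in worker functions, and the hard-coded plan are all hypothetical, and a real master would produce the plan with an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A worker is modelled as a function from a task description to a result.
Worker = Callable[[str], str]


def graph_agent(task: str) -> str:
    # Stand-in for an LLM-backed agent that only produces Plotly charts.
    return f"[plotly spec for: {task}]"


def sql_agent(task: str) -> str:
    # Stand-in for an agent restricted to read-only SELECT queries.
    return f"SELECT ... -- for: {task}"


@dataclass
class Master:
    workers: Dict[str, Worker]
    # The master, not the workers, owns the project state.
    state: Dict[str, str] = field(default_factory=dict)

    def run(self, plan: List[Tuple[str, str]]) -> Dict[str, str]:
        # Each plan step is (worker_name, task); route it to the named worker.
        for worker_name, task in plan:
            if worker_name not in self.workers:
                raise KeyError(f"no worker registered for {worker_name!r}")
            self.state[task] = self.workers[worker_name](task)
        return self.state


master = Master(workers={"graph": graph_agent, "sql": sql_agent})
result = master.run([("sql", "monthly revenue"), ("graph", "revenue trend")])
```

Workers never call each other here; every hand-off passes through the master, which is what keeps the connection count linear in the number of workers.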

3) Communication Protocol: The Rise of MCP

In 2024, agents used custom JSON schemas to talk. It was a mess. In 2026, the Model Context Protocol (MCP) is the USB-C of agents.

- Standardization: Defines how a server exposes its tools (callable functions), resources (readable data), and prompts (reusable templates) to a client such as the Master.

- Interoperability: A Salesforce Agent can call a GitHub Agent without custom glue code because they both speak MCP.
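
To make the standardization point concrete, the sketch below builds the kind of JSON-RPC 2.0 messages MCP uses for tool discovery (`tools/list`) and invocation (`tools/call`). It only constructs the messages and does not open a connection; the `rpc_request` helper, the `run_select` tool, and its schema are hypothetical names chosen for illustration.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_next_id = count(1)


def rpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    # Build a JSON-RPC 2.0 request envelope.
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


# 1. The client asks the server which tools it offers.
list_request = rpc_request("tools/list")

# 2. A server might answer with a tool description like this one.
#    "run_select" and its input schema are hypothetical.
list_result = {
    "tools": [
        {
            "name": "run_select",
            "description": "Run a read-only SELECT query",
            "inputSchema": {
                "type": "object",
                "properties": {"query": {"type": "string"}},
                "required": ["query"],
            },
        }
    ]
}

# 3. The client invokes the tool by name, with arguments matching the schema.
call_request = rpc_request(
    "tools/call",
    {"name": list_result["tools"][0]["name"], "arguments": {"query": "SELECT 1"}},
)

print(json.dumps(call_request, indent=2))
```

Because the tool advertises its own input schema, the caller needs no agent-specific glue code to know what arguments to send.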

4) Code Example: Building a Graph with LangGraph

We use LangGraph (or similar state-machine frameworks) to enforce the flow.

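```python
# Sketch of a Research-Critique Loop.
# Assumes `llm` is a model client defined elsewhere whose invoke() returns a string.
from typing import TypedDict

from langgraph.graph import StateGraph, END

MAX_REVISIONS = 3  # assumed cap so the loop always terminates


# Define the "State" passed between agents
class AgentState(TypedDict):
    draft: str
    critique: str
    revision_count: int


def researcher(state):
    # Generates initial content and counts the revision
    return {
        "draft": llm.invoke("Write about X..."),
        "revision_count": state.get("revision_count", 0) + 1,
    }


def critic(state):
    # Reviews content for errors
    return {"critique": llm.invoke(f"Critique this: {state['draft']}")}


def should_continue(state):
    # Placeholder check: stop on a clean critique or when the budget is spent
    clean = "no issues" in state["critique"].lower()
    return "stop" if clean or state["revision_count"] >= MAX_REVISIONS else "continue"


# Define the Workflow (The "Orchestration")
workflow = StateGraph(AgentState)
workflow.add_node("researcher", researcher)
workflow.add_node("critic", critic)
workflow.set_entry_point("researcher")

# Cyclic connection: Research -> Critic -> Research
workflow.add_edge("researcher", "critic")
workflow.add_conditional_edges(
    "critic",
    should_continue,  # If critique is clean, go to END. Else, back to Research.
    {"continue": "researcher", "stop": END},
)

app = workflow.compile()
```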

5) Observability: Debugging the Swarm

Debugging a loop where Agent A lies to Agent B is a nightmare.

- Distributed Tracing: Every “thought” and “tool call” should be recorded as a span in a trace (for example, with OpenTelemetry), so a failure can be followed across agents.

- The “VCR” Pattern: You must record the inputs/outputs of every step. If the swarm fails, you should be able to “Replay” the execution up to step 4, then step in manually.
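
The “VCR” idea can be sketched with the standard library alone. This is an illustrative sketch, not a tracing product: the `Recorder` class and the three stand-in steps are hypothetical, and it assumes each step's input and output are JSON-serializable.

```python
import json
from typing import Any, Callable, Dict, List

Step = Callable[[Dict[str, Any]], Dict[str, Any]]


class Recorder:
    """Records each step's input and output so a run can be replayed."""

    def __init__(self) -> None:
        self.tape: List[Dict[str, Any]] = []

    def run(self, steps: List[Step], state: Dict[str, Any]) -> Dict[str, Any]:
        for index, step in enumerate(steps, start=1):
            output = step(state)
            entry = {"step": index, "name": step.__name__, "input": state, "output": output}
            # Round-trip through JSON so the tape holds copies, not live references.
            self.tape.append(json.loads(json.dumps(entry)))
            state = {**state, **output}
        return state

    def replay(self, until_step: int) -> Dict[str, Any]:
        # Rebuild the state from recorded outputs without calling any agent.
        if not self.tape:
            return {}
        state = dict(self.tape[0]["input"])
        for entry in self.tape[:until_step]:
            state.update(entry["output"])
        return state


def plan(state: Dict[str, Any]) -> Dict[str, Any]:
    return {"plan": f"outline for {state['goal']}"}


def draft(state: Dict[str, Any]) -> Dict[str, Any]:
    return {"draft": f"text based on {state['plan']}"}


def review(state: Dict[str, Any]) -> Dict[str, Any]:
    return {"verdict": "needs work"}


recorder = Recorder()
final_state = recorder.run([plan, draft, review], {"goal": "migration report"})
state_after_step_2 = recorder.replay(until_step=2)
```

Replaying to step 2 restores the state exactly as the reviewer saw it, so a human can inspect or modify it before resuming, without paying for the earlier model calls again.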

6) Case Study: Automated Code Migration

A fintech company used a swarm to migrate 50,000 lines of COBOL to Java[2].

- Agent 1 (Decompiler): Analyzes COBOL logic.

- Agent 2 (Architect): Maps it to Java patterns (Spring Boot).

- Agent 3 (Tester): Writes unit tests that capture the COBOL behavior, then runs the same tests against the generated Java.

- Outcome: 94% automated conversion. The remaining 6% was handled by a “Human-in-the-Loop” queue, to which the Master Agent escalated ambiguous logic.

7) Challenges: The “Infinite Loop”

Agents optimize for “Task Completion.” If Agent A needs a file from Agent B, and Agent B says “I need permission from A,” they deadlock, or keep bouncing the request back and forth indefinitely.

- Solution: Time-to-Live (TTL) on workflows. If a task isn’t done within a fixed step budget (for example, 10 steps), the Master Agent kills the thread and escalates the exception to a human.
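
A step-budget TTL is simple to express in code. The sketch below is one possible shape, with hypothetical names throughout (`run_with_ttl`, `WorkflowTimeout`, `deadlocked_step`); it assumes each step returns the next state and sets `done` when the task is finished.

```python
from typing import Any, Callable, Dict


class WorkflowTimeout(Exception):
    """Raised when a workflow exhausts its step budget and needs a human."""


def run_with_ttl(
    step: Callable[[Dict[str, Any]], Dict[str, Any]],
    state: Dict[str, Any],
    max_steps: int = 10,
) -> Dict[str, Any]:
    # Advance the workflow until it reports completion or the budget runs out.
    for _ in range(max_steps):
        state = step(state)
        if state.get("done"):
            return state
    raise WorkflowTimeout(f"not finished after {max_steps} steps; escalate to a human")


def deadlocked_step(state: Dict[str, Any]) -> Dict[str, Any]:
    # Simulates two agents handing the request back and forth forever.
    holder = "B" if state["waiting_on"] == "A" else "A"
    return {**state, "waiting_on": holder}


try:
    run_with_ttl(deadlocked_step, {"waiting_on": "A"})
    escalated = False
except WorkflowTimeout:
    escalated = True
```

Counting steps rather than wall-clock time keeps the limit deterministic, which makes a killed run easier to reproduce.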

8) Key Takeaways

- Define Boundaries: Agents fail when their scope is too broad. “Make me a website” fails. “Write the HTML for the navbar” succeeds.

- State is Sacred: Never let agents keep state only in their context window. Persist it in a database that serves as external memory.

- Human Gating: Always put a human approval step before any “Write” action to a production database.
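
As an illustration of the “State is Sacred” takeaway, here is a minimal sketch of a workflow state store backed by SQLite from the Python standard library. The `StateStore` class, the table name, and the `wf-1` identifier are hypothetical, and the sketch assumes the state is JSON-serializable.

```python
import json
import sqlite3
from typing import Any, Dict


class StateStore:
    """Persists workflow state outside any agent's context window."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS workflow_state ("
            "workflow_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )

    def save(self, workflow_id: str, state: Dict[str, Any]) -> None:
        # Overwrite any previous snapshot for this workflow.
        self.conn.execute(
            "INSERT OR REPLACE INTO workflow_state (workflow_id, state) VALUES (?, ?)",
            (workflow_id, json.dumps(state)),
        )
        self.conn.commit()

    def load(self, workflow_id: str) -> Dict[str, Any]:
        row = self.conn.execute(
            "SELECT state FROM workflow_state WHERE workflow_id = ?", (workflow_id,)
        ).fetchone()
        return json.loads(row[0]) if row else {}


store = StateStore()
store.save("wf-1", {"step": 1, "draft": "v1"})
store.save("wf-1", {"step": 2, "draft": "v2"})
restored = store.load("wf-1")
```

An agent that crashes or is restarted can call `load` and continue from the last saved step instead of relying on whatever survived in its prompt.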

[1] Instaclustr, “Agentic AI Frameworks: Top 8 Options in 2026,” Jan 2026.

[2] Acuvate, “Multi-Agent Orchestration Case Studies,” 2026.

[3] Microsoft, “Autogen: Enabling Next-Gen LLM Applications,” 2025.

[4] Anthropic, “Building Effective Agents,” Dec 2025.
