Quantum-AI Convergence: Real-World Hybrid Applications in 2026

Moving beyond theoretical supremacy: How hybrid quantum-classical workflows are delivering value today in finance and logistics. We analyze IonQ, Xanadu, and the timeline to fault tolerance.

Summary: The “Quantum Winter” is thawing, not because of a sudden breakthrough in error correction, but because of Hybrid Approaches. By offloading specific sub-routines (like optimization) to noisy quantum processors while keeping the rest on classical GPUs, enterprises are finally seeing “Quantum Utility” in production.

1) Executive Summary

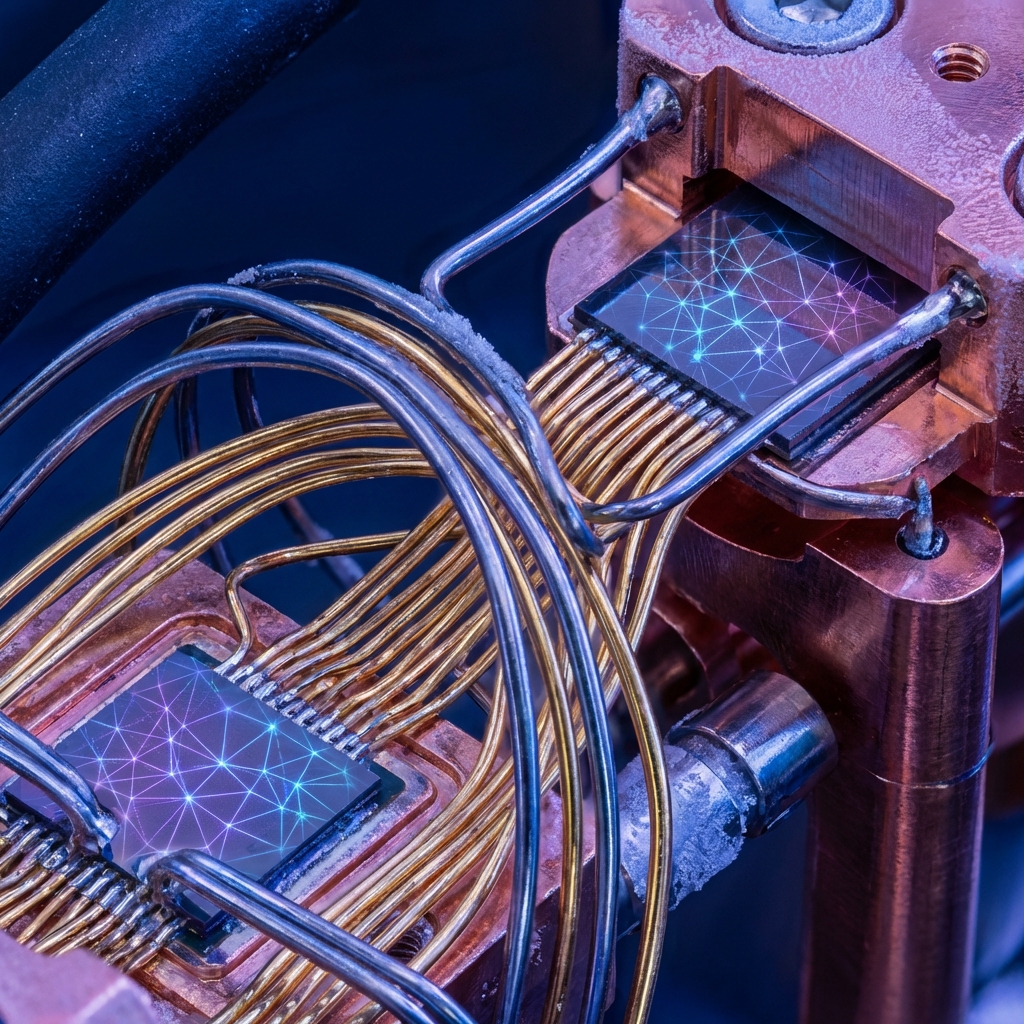

In 2026, the narrative around Quantum Computing has shifted from “Supremacy” (doing what classical computers can’t) to “Utility” (doing specific tasks more efficiently). We are still years away from fault-tolerant, universal quantum computers. However, the convergence of Quantum Machine Learning (QML) and AI has created a sweet spot for Hybrid Architectures. By treating Quantum Processing Units (QPUs) as specialized accelerators—similar to how GPUs accelerated Deep Learning in 2012—companies like Goldman Sachs and BASF are now running production pilots for portfolio optimization and molecular simulation[1]. This analysis examines the technical reality of IonQ and Xanadu’s 2026 offerings and the economic roadmap to 2030.

2) The Hybrid Workflow: CPU + GPU + QPU

The breakthrough of 2026 isn’t a better qubit; it’s better Orchestration.

Real-world applications don’t run entirely on a quantum computer. That would be inefficient. Instead, they use a variational loop, the pattern behind the Variational Quantum Eigensolver (VQE):

- Classical CPU: Defines the problem parameters (e.g., “Minimize risk for this portfolio”).

- Quantum QPU: Prepares a parameterized quantum state representing a candidate solution and measures it.

- Classical GPU: Evaluates the cost from the measurement results and provides feedback by updating the circuit parameters (“Good, but try rotating Qubit 3”).

- Loop: The system iterates until convergence.

This hybrid approach allows us to get value from “Noisy Intermediate-Scale Quantum” (NISQ) devices today, tolerating errors that would otherwise ruin a pure quantum calculation.
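The loop above can be sketched in a few lines of Python. This is a minimal illustration, not a production workflow: the QPU is replaced by an exact one-qubit simulation, and the names `qpu_expectation`, `parameter_shift_gradient`, and `run_hybrid_loop` are hypothetical. The circuit is a single RY(theta) rotation on |0>, the cost is the expectation value of Z, and the classical optimizer is plain gradient descent using the parameter-shift rule.

```python
import math


def qpu_expectation(theta):
    """Stand-in for the QPU: prepare RY(theta)|0> and return <Z>.

    On real hardware this value would be estimated from repeated shots;
    here it is computed exactly from the state amplitudes.
    """
    amp0 = math.cos(theta / 2)  # amplitude of |0>
    amp1 = math.sin(theta / 2)  # amplitude of |1>
    return amp0 ** 2 - amp1 ** 2


def parameter_shift_gradient(theta):
    """Gradient of <Z> w.r.t. theta from two extra circuit evaluations."""
    shift = math.pi / 2
    return 0.5 * (qpu_expectation(theta + shift) - qpu_expectation(theta - shift))


def run_hybrid_loop(theta=0.3, learning_rate=0.4, tol=1e-6, max_iters=500):
    """Classical optimizer: update theta until the gradient is near zero."""
    for _ in range(max_iters):
        grad = parameter_shift_gradient(theta)
        if abs(grad) < tol:
            break
        theta -= learning_rate * grad  # feedback sent back to the "QPU"
    return theta, qpu_expectation(theta)


if __name__ == "__main__":
    theta, energy = run_hybrid_loop()
    print(f"theta = {theta:.4f}, minimum <Z> = {energy:.6f}")
```

In a real deployment the body of `qpu_expectation` would be a call to a hardware or simulator backend, while the optimizer loop stays on classical hardware.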

3) Hardware Landscape: Three Approaches

The market has consolidated around three distinct physical implementations, each with a specific “Quantum Volume” and error profile.

| Feature | IonQ (Trapped Ion) | Xanadu (Photonic) | IBM (Superconducting) |
|---|---|---|---|
| Qubit Count (2026) | 64 Algorithmic Qubits | 216 Logical Qubits | 4,000+ Physical Qubits |
| Coherence Time | Long (Seconds) | Short (Nanoseconds) | Medium (Microseconds) |
| Connectivity | All-to-All | Networked | Nearest Neighbor |
| Operation Temp | Room Temp | Room Temp (mostly) | Near Absolute Zero |
| Best For… | Optimization, Finance | Networking, ML | Materials Science |

Technical Insight: IonQ’s “All-to-All” connectivity means any qubit can interact directly with any other, with no SWAP operations needed to move qubits next to each other first. This reduces the gate count for densely coupled optimization problems, making it the preferred backend for financial QML in 2026.
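To make the gate-count argument concrete, here is a rough sketch of the routing overhead. It rests on a deliberately naive assumption: every two-qubit gate is routed independently from the original layout on a linear nearest-neighbor chain, so a gate between qubits `i` and `j` costs `|i - j| - 1` SWAPs. Real compilers do considerably better (for example with swap networks), so treat the numbers as an upper-bound illustration. The function names are hypothetical.

```python
from itertools import combinations


def swaps_on_linear_chain(i, j):
    """SWAPs needed to bring qubits i and j adjacent on a 1D chain."""
    return max(abs(i - j) - 1, 0)


def naive_swap_overhead(n_qubits, connectivity):
    """Extra SWAPs for one layer that couples every pair of qubits.

    connectivity: "all_to_all" (no routing needed) or "linear"
    (nearest-neighbor chain, each gate routed independently).
    """
    if connectivity == "all_to_all":
        return 0
    if connectivity == "linear":
        pairs = combinations(range(n_qubits), 2)
        return sum(swaps_on_linear_chain(i, j) for i, j in pairs)
    raise ValueError(f"unknown connectivity: {connectivity}")


if __name__ == "__main__":
    for n in (4, 8, 16):
        print(n, naive_swap_overhead(n, "all_to_all"), naive_swap_overhead(n, "linear"))
```

Under this model the overhead grows roughly cubically with qubit count for fully coupled problems, which is why dense cost functions such as portfolio covariance terms are sensitive to connectivity.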

4) Use Case: Financial Optimization

The “Traveling Salesman Problem” is hard. The “Dynamic Portfolio Optimization” problem is harder.

- The Challenge: Selecting the best combination of 500 assets to maximize return vs. risk involves $2^{500}$ possibilities. Classical Monte Carlo simulations take hours.

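- The Quantum Edge: A hybrid algorithm such as QAOA (Quantum Approximate Optimization Algorithm) searches this solution space heuristically. The often-quoted quadratic speedup is a theoretical result for amplitude-estimation-style sampling compared with classical Monte Carlo; for QAOA on NISQ hardware, any advantage is empirical and problem-dependent.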

- 2026 Reality: Banks are using this not to “solve” the market, but to re-balance portfolios intra-day rather than overnight. The speed advantage allows for real-time risk mitigation during volatility[2].
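The selection problem described above is usually written as a binary optimization: each asset gets a bit (1 = hold, 0 = skip), and the cost trades portfolio variance against expected return. The sketch below brute-forces a toy four-asset instance to show the formulation and why exhaustive search does not scale. The return and covariance figures are invented for illustration, and the function names are hypothetical; a hybrid solver would replace only the search step.

```python
from itertools import product

# Illustrative (made-up) data for four assets.
EXPECTED_RETURN = [0.10, 0.20, 0.15, 0.05]
COVARIANCE = [
    [0.02, 0.00, 0.00, 0.00],
    [0.00, 0.10, 0.04, 0.00],
    [0.00, 0.04, 0.03, 0.00],
    [0.00, 0.00, 0.00, 0.01],
]


def portfolio_cost(bits, risk_aversion=1.0):
    """Mean-variance cost: risk_aversion * x^T C x - mu^T x (lower is better)."""
    n = len(bits)
    risk = sum(bits[i] * COVARIANCE[i][j] * bits[j]
               for i in range(n) for j in range(n))
    reward = sum(b * r for b, r in zip(bits, EXPECTED_RETURN))
    return risk_aversion * risk - reward


def brute_force_best(n_assets, budget):
    """Check every bitstring that holds exactly `budget` assets."""
    candidates = (b for b in product((0, 1), repeat=n_assets) if sum(b) == budget)
    return min(candidates, key=portfolio_cost)


def search_space_size(n_assets):
    """Number of hold/skip combinations before any constraint is applied."""
    return 2 ** n_assets


if __name__ == "__main__":
    best = brute_force_best(4, budget=2)
    print(best, round(portfolio_cost(best), 4))
    print(len(str(search_space_size(500))), "digits in 2^500")
```

Note that the two highest-return assets are not chosen together here because of their covariance term; that pairwise coupling is what makes the problem combinatorial rather than a simple sort.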

5) The Timeline: To Fault Tolerance

We are currently in the “Utility Era.” The “Fault Tolerant Era” (where error correction allows for million-qubit operations) remains the holy grail.

- 2026 (Now): Quantum Utility. Hybrid workflows provide speedups for specific niche optimization problems.

- 2027-2028: Logical Qubit Break-even. We will finally see a “Logical Qubit” (made of many physical qubits) that lasts longer than its constituent parts. This is the “Transistor Moment.”

- 2030+: Fault Tolerance. Breaking RSA encryption and simulating caffeine molecules perfectly.

6) Major Challenges

- Error Rates: NISQ devices are noisy. You have to run the same circuit many times (on the order of 1,000 “shots”) to get a statistical distribution of the answer. This overhead eats into the speedup.

- Data Loading: The “QRAM” (Quantum RAM) bottleneck. It takes a long time to load classical data (like stock prices) into a quantum state. This is why “Big Data” problems are actually bad for quantum right now; “Small Data, Hard Calc” problems are better.

- Talent Gap: There are fewer than 5,000 qualified Quantum Algorithm Engineers globally.
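The shot overhead noted under Error Rates follows from basic sampling statistics: estimating a measurement probability from N shots carries a standard error of sqrt(p(1 - p) / N), so halving the error costs four times as many circuit runs. The sketch below models only this sampling noise, not hardware gate errors, and uses a seeded pseudo-random generator in place of a device; the function names are hypothetical.

```python
import math
import random


def standard_error(p, shots):
    """Standard error of a probability estimated from `shots` samples."""
    return math.sqrt(p * (1 - p) / shots)


def estimate_probability(p_true, shots, seed=0):
    """Simulate `shots` measurements of a qubit that reads 1 with p_true."""
    rng = random.Random(seed)
    ones = sum(1 for _ in range(shots) if rng.random() < p_true)
    return ones / shots


if __name__ == "__main__":
    for shots in (100, 1_000, 10_000):
        est = estimate_probability(0.5, shots)
        print(f"{shots:>6} shots: estimate={est:.3f}, "
              f"expected error={standard_error(0.5, shots):.4f}")
```

Because the error shrinks only with the square root of the shot count, each extra digit of precision multiplies the number of circuit executions by 100.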

7) Key Takeaways

- Don’t wait for perfection. If you wait for a fault-tolerant computer, you will miss the IP generation phase happening now.

- Focus on Optimization. If your problem involves “finding the best X out of Y possibilities” (combinatorial optimization), look at quantum.

- Hybrid is the architecture. Stop looking for a “Quantum App.” Look for a “Quantum-Accelerated Module” in your existing AI pipeline.

[1] IBM, “The State of Quantum Utility 2026,” Jan 2026.

[2] SC Quantum, “Real-World Quantum Use Cases in Finance,” 2026.

[3] TechTarget, “9 Top AI and Machine Learning Trends,” 2025.

[4] Bernard Marr, “7 Quantum Computing Trends 2026,” Dec 2025.
