RAG Systems for Enterprise AI: The 2026 Implementation Guide

Moving beyond basic chatbots: A technical guide to building production-ready Retrieval-Augmented Generation (RAG) systems. We cover hybrid retrieval architectures, vector database selection, and evaluation frameworks for the enterprise.

Summary: Basic RAG pipelines are easy to build but hard to scale. Enterprise-grade RAG requires a shift from simple “semantic search” to hybrid retrieval systems that combine vector similarity with keyword precision and structured metadata filtering.

1) Executive Summary

Retrieval-Augmented Generation (RAG) has become the standard architecture for grounding Large Language Models (LLMs) in private enterprise data. However, the “naive RAG” approach of dumping PDFs into a vector store and querying them fails in production: it hallucinates on specific numbers, misses keyword-heavy queries (like part numbers), and struggles with complex reasoning. This guide details the Hybrid Retrieval Architecture adopted by data-mature enterprises in 2026, compares leading vector databases (Pinecone, Weaviate, Qdrant, and Chroma), and provides a Python implementation pattern for this kind of system, for which retrieval precision above 95% has been reported[1].

2) Why Naive RAG Fails in Production

In 2024, many companies built “Chat with your PDF” prototypes. By 2025, they realized these prototypes couldn’t handle enterprise complexity.

- The “Lost in the Middle” Phenomenon: LLMs struggle to find relevant information if it’s buried in the middle of a large context window.

- Dense vs. Sparse Mismatch: Vector search (Dense) is great for concepts (“How do I reset my password?”) but terrible for exact matches (“Error code 0x8004101”).

- Stale Data: Source documents change, and every change invalidates the embeddings derived from them. Re-indexing the whole corpus on each update is expensive.
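
One common way to limit the re-indexing cost is to fingerprint each chunk and re-embed only the chunks whose content changed. The sketch below is a minimal illustration under assumptions: the chunk ids, the sample texts, and the `plan_reindex` helper are hypothetical, and the actual embedding and vector-store calls are left out.

```python
import hashlib


def chunk_fingerprint(text: str) -> str:
    """Stable fingerprint of a chunk's content (SHA-256 of its UTF-8 bytes)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(
    stored: dict[str, str], current_chunks: dict[str, str]
) -> tuple[list[str], list[str]]:
    """Decide which chunks need work since the last indexing run.

    stored:         chunk_id -> fingerprint saved at the last run
    current_chunks: chunk_id -> chunk text as of now
    Returns (to_embed, to_delete).
    """
    # New or edited chunks: fingerprint is missing or no longer matches.
    to_embed = sorted(
        cid for cid, text in current_chunks.items()
        if stored.get(cid) != chunk_fingerprint(text)
    )
    # Chunks that disappeared from the source should leave the index too.
    to_delete = sorted(cid for cid in stored if cid not in current_chunks)
    return to_embed, to_delete


# Hypothetical state from the previous indexing run.
previous = {
    "policy-1": chunk_fingerprint("MRI is covered under Plan B."),
    "policy-2": chunk_fingerprint("Dental is not covered."),
    "policy-3": chunk_fingerprint("Old appendix."),
}
# Hypothetical current content.
latest = {
    "policy-1": "MRI is covered under Plan B.",     # unchanged
    "policy-2": "Dental is covered from 2026.",     # edited
    "policy-4": "Vision is covered under Plan C.",  # new
}

to_embed, to_delete = plan_reindex(previous, latest)
print("re-embed:", to_embed)
print("delete:", to_delete)
```

Only the edited and new chunks are sent to the embedding model; the unchanged chunk costs nothing.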

3) The Solution: Hybrid Retrieval Architecture

Production RAG systems in 2026 use a Hybrid Search strategy. They query two indices simultaneously:

- Dense Index (Vector DB): Captures semantic meaning (for questions like “Tell me about the Q3 strategy”).

- Sparse Index (BM25/SPLADE): Captures exact keyword matches (for questions like “Who is the lead for Project Alpha?”).

A Re-Ranking Model (like Cohere Rerank or BGE-Reranker) then takes the top results from both, scores them by relevance, and feeds only the best chunks to the LLM.

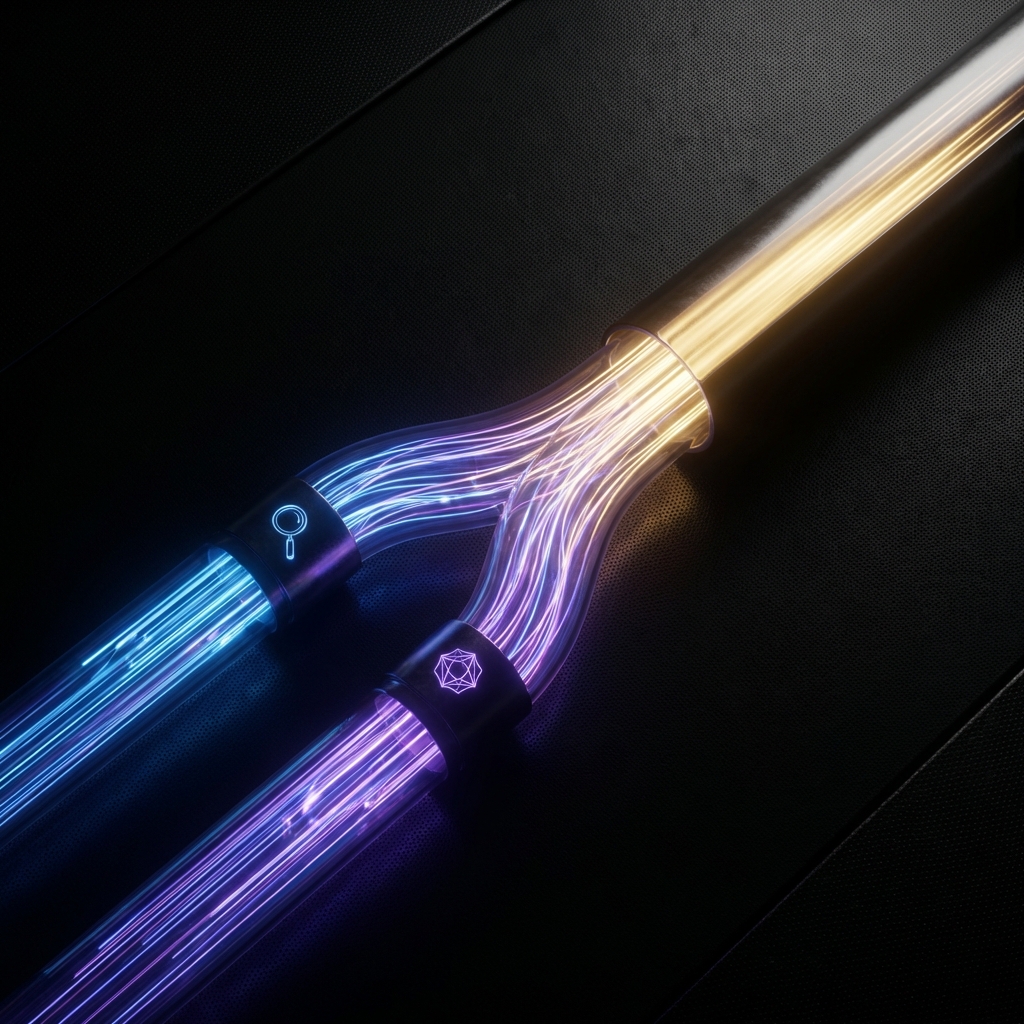

Architecture Diagram Description

(Suggested Visualization: A pipeline showing “User Query” splitting into two paths: Vector Search and Keyword Search. Both feed into a “Re-Ranker” block, which outputs “Top K Contexts” to the “LLM Generation” block.)
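
Before a re-ranking model scores anything, the two result lists have to be merged into one candidate set. A widely used merge step is Reciprocal Rank Fusion (RRF), sketched below. This is an assumption about how the merge could be done, not a description of any specific product: the document ids are hypothetical, and `k = 60` is the constant conventionally used with RRF. A re-ranker such as the ones named above would then re-score the fused candidates.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document ids into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical top-3 results from each index.
dense_hits = ["doc_a", "doc_b", "doc_c"]   # vector search
sparse_hits = ["doc_c", "doc_a", "doc_d"]  # keyword search

fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
print(fused)
```

Documents found by both retrievers (`doc_a`, `doc_c`) outrank those found by only one, which is the behaviour a hybrid system is after.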

4) Vector Database Comparison (2026)

Choosing the right storage backend is critical. Here is how the market leaders stack up for enterprise workloads:

| Feature | Pinecone (Serverless) | Weaviate (Open Source) | Qdrant (Rust-based) | Chroma (Developer-First) |
|---|---|---|---|---|
| Architecture | Closed / SaaS | Open / Go | Open / Rust | Open / Python |
| Hybrid Search | Native (SPLADE) | Native (BM25) | Native (BM25) | Basic |
| Indexing Speed | Fast (Proprietary) | Moderate | Very Fast | Moderate |
| Metadata Filtering | Excellent (Post-filter) | Excellent (Pre-filter) | Excellent | Good |
| Enterprise Cost | $$$ (Consumption) | $$ (Compute) | $ (Efficiency) | $ (Self-hosted) |
| Best For… | Rapid scale-up | Customization | Performance/Rust | Prototyping |

5) Implementation: Advanced Chunking Strategy

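Splitting text at a fixed character count (e.g., “500 chars”) cuts sentences and paragraphs mid-thought and breaks semantic meaning. Recursive character chunking still enforces a size limit, but it prefers natural boundaries: it tries paragraph breaks first and falls back to finer separators only when a piece is still too large.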

    # Production-grade chunking with LangChain
    # (requires the langchain-text-splitters package)
    from langchain_text_splitters import RecursiveCharacterTextSplitter

    text_splitter = RecursiveCharacterTextSplitter(
        separators=["\n\n", "\n", ".", " ", ""],
        chunk_size=1000,
        chunk_overlap=200,
        length_function=len,
        is_separator_regex=False,
    )

    # Why this works:
    # 1. Tries to split by paragraphs (\n\n) first.
    # 2. If a paragraph is too big, splits by lines (\n), then sentences and words.
    # 3. Keeps up to 200 chars of overlap so context isn't lost at boundaries.

    # long_document_text: the raw text of one document, loaded elsewhere
    docs = text_splitter.create_documents([long_document_text])
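
To make the fallback order concrete, here is a simplified, dependency-free sketch of the recursive idea. It is not LangChain's implementation: it omits chunk overlap, and the `recursive_split` helper and the sample text are hypothetical.

```python
def recursive_split(text: str, separators: list[str], chunk_size: int) -> list[str]:
    """Split text into chunks of at most chunk_size characters,
    trying the coarsest separator first and finer ones only when needed."""
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    if not separators:
        # Nothing left to split on: fall back to a hard cut.
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

    sep, finer = separators[0], separators[1:]
    chunks: list[str] = []
    buffer = ""
    for piece in text.split(sep):
        candidate = piece if not buffer else buffer + sep + piece
        if len(candidate) <= chunk_size:
            buffer = candidate  # keep packing pieces into the current chunk
            continue
        if buffer:
            chunks.append(buffer)
        if len(piece) <= chunk_size:
            buffer = piece
        else:
            # This piece alone is too big: retry with the finer separators.
            chunks.extend(recursive_split(piece, finer, chunk_size))
            buffer = ""
    if buffer:
        chunks.append(buffer)
    return chunks


sample = (
    "Intro paragraph.\n\n"
    "Second paragraph line one.\nSecond paragraph line two."
)
chunks = recursive_split(sample, ["\n\n", "\n", " "], chunk_size=30)
for chunk in chunks:
    print(repr(chunk))
```

The first paragraph fits and stays whole; the second is too long for one chunk, so it is split at the line break rather than in the middle of a sentence.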

6) Code Example: Hybrid Retrieval Pipeline

Here is a simplified Python pattern for a high-recall retrieval chain:

    # Requires: langchain, langchain-community, langchain-chroma, langchain-openai, rank_bm25
    # In newer LangChain releases, EnsembleRetriever may live in langchain_classic.retrievers.
    from langchain.retrievers import EnsembleRetriever
    from langchain_community.retrievers import BM25Retriever
    from langchain_chroma import Chroma
    from langchain_openai import OpenAIEmbeddings

    # `documents` is the list of chunked Document objects (e.g., `docs` from Section 5).
    # The Chroma collection is assumed to already contain the same chunks.

    # 1. Initialize Semantic Search (Vector)
    embedding = OpenAIEmbeddings(model="text-embedding-3-large")
    vector_store = Chroma(embedding_function=embedding, persist_directory="./chroma_db")
    dense_retriever = vector_store.as_retriever(search_kwargs={"k": 5})

    # 2. Initialize Keyword Search (BM25)
    # Note: BM25 scores the raw tokenized text, so exact terms match directly
    sparse_retriever = BM25Retriever.from_documents(documents)
    sparse_retriever.k = 5

    # 3. Combine with Ensemble (Hybrid)
    # Results are merged by weighted rank fusion; equal weights are a reasonable
    # starting baseline, to be tuned on your own evaluation set
    ensemble_retriever = EnsembleRetriever(
        retrievers=[dense_retriever, sparse_retriever],
        weights=[0.5, 0.5],
    )

    # 4. Query
    relevant_docs = ensemble_retriever.invoke("Error code 503 on payment gateway")

7) Evaluation Framework: Ragas & TruLens

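You cannot improve what you cannot measure. In 2026, RAG systems are evaluated on three axes, often called the RAG Triad (the term comes from TruLens, which calls the first two axes context relevance and groundedness; the labels below follow Ragas):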

- Context Precision: Did the retrieval find the right paragraphs? (Evaluated by LLM).

- Faithfulness: Is the answer derived only from the context (no hallucinations)?

- Answer Relevance: Does the answer actually address the user’s query?

Tools like Ragas and TruLens automate this. This guide treats a pipeline as “Production Ready” only when Faithfulness > 0.9 and Context Precision > 0.85 on a golden dataset (a curated set of questions with known-good answers and sources).
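
The thresholds above translate naturally into a deployment gate. The sketch below is a minimal illustration, not Ragas or TruLens API usage: it assumes the metric scores have already been computed by your evaluation tool and exported as a plain dictionary, and the `gate` helper and the sample scores are hypothetical.

```python
# Thresholds taken from the text: a score must be strictly greater to pass.
THRESHOLDS = {"faithfulness": 0.90, "context_precision": 0.85}


def gate(scores: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return a list of failure messages; an empty list means the gate passes."""
    failures = []
    for metric, minimum in thresholds.items():
        value = scores.get(metric)
        if value is None:
            failures.append(f"{metric}: missing from evaluation output")
        elif value <= minimum:
            failures.append(f"{metric}: {value:.2f} is not above {minimum:.2f}")
    return failures


# Hypothetical scores from two evaluation runs on the golden dataset.
good_run = {"faithfulness": 0.94, "context_precision": 0.88}
bad_run = {"faithfulness": 0.94, "context_precision": 0.80}

good_failures = gate(good_run, THRESHOLDS)
bad_failures = gate(bad_run, THRESHOLDS)

print("good run:", "PASS" if not good_failures else good_failures)
print("bad run:", "PASS" if not bad_failures else bad_failures)
```

In a CI job, a non-empty failure list would typically end the run with a non-zero exit code so the deployment step never starts.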

8) Real-World Case Study: Healthcare QA

A major US healthcare provider implemented a RAG system for insurance policy questions[2].

- Challenge: 50,000 PDF pages of changing policies. “Does Plan B cover MRI?”

- Naive Approach: Failed in practice because it missed “exceptions” listed in footnotes.

- Hybrid Fix: Implemented parent-child chunking. The vector search finds a small “child” chunk (the footnote), but the retriever returns the “parent” chunk (the whole page) to the LLM so it has full context.

- Result: 92% accurate answers, reducing call center volume by 30%.
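
The parent-child pattern from the case study can be sketched in a few lines. This is an illustrative toy, not the provider's implementation: the page ids and texts are invented, children are single sentences, and a simple word-overlap score stands in for vector similarity.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ChildChunk:
    parent_id: str  # id of the page this chunk was cut from
    text: str


def tokens(text: str) -> set[str]:
    """Lowercased word set, used by the stand-in similarity score."""
    return set(re.findall(r"\w+", text.lower()))


def build_children(pages: dict[str, str]) -> list[ChildChunk]:
    """Cut every page into sentence-sized children that remember their parent."""
    children = []
    for page_id, page_text in pages.items():
        for sentence in re.split(r"(?<=[.?!])\s+", page_text):
            if sentence.strip():
                children.append(ChildChunk(page_id, sentence.strip()))
    return children


def retrieve_parents(query: str, children: list[ChildChunk],
                     pages: dict[str, str], top_k: int = 1) -> list[str]:
    """Match against small children, but return the full parent pages."""
    q = tokens(query)
    # Stand-in scorer: word overlap. A real system would use vector similarity.
    ranked = sorted(children, key=lambda c: len(q & tokens(c.text)), reverse=True)
    parent_ids: list[str] = []
    for child in ranked:
        if child.parent_id not in parent_ids:
            parent_ids.append(child.parent_id)
        if len(parent_ids) == top_k:
            break
    return [pages[pid] for pid in parent_ids]


# Hypothetical policy pages.
pages = {
    "plan-b-p12": "Plan B covers diagnostic imaging. "
                  "Footnote: MRI requires prior authorization.",
    "plan-b-p13": "Plan B covers annual dental cleaning.",
}
children = build_children(pages)
contexts = retrieve_parents("MRI prior authorization", children, pages)
print(contexts[0])
```

The query matches only the footnote sentence, yet the LLM receives the whole page, so the coverage statement and its exception arrive together.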

9) Cost Analysis

Running RAG isn’t free.

- Embedding Costs: Minimal (OpenAI text-embedding-3-small is ~$0.00002/1k tokens). Indexing 1M pages costs <$20.

- Vector Storage: The real cost. Hosting 1M vectors in Pinecone p2 pods can cost $700-$1000/month.

- Inference: The biggest cost. Generating a 500-token answer with GPT-4o costs ~$0.03.

- Optimization: Use caching (GPTCache) for similar queries to drop costs by 40%.
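
The arithmetic behind these figures is easy to reproduce. The sketch below uses the prices quoted above and two labeled assumptions that are not from the source: an average of 500 tokens per page and a hypothetical traffic of 100,000 queries per month.

```python
EMBED_PRICE_PER_1K_TOKENS = 0.00002  # quoted above for text-embedding-3-small
COST_PER_ANSWER = 0.03               # quoted above for a 500-token GPT-4o answer
CACHE_SAVINGS = 0.40                 # quoted above for caching similar queries
TOKENS_PER_PAGE = 500                # assumption: average tokens per page
QUERIES_PER_MONTH = 100_000          # assumption: hypothetical traffic


def embedding_cost(pages: int, tokens_per_page: int, price_per_1k: float) -> float:
    """One-off cost of embedding a corpus, in dollars."""
    return pages * tokens_per_page / 1000 * price_per_1k


def monthly_inference_cost(queries: int, cost_per_answer: float,
                           cache_savings: float = 0.0) -> float:
    """Monthly generation cost, reduced by the fraction saved through caching."""
    return queries * cost_per_answer * (1.0 - cache_savings)


index_cost = embedding_cost(1_000_000, TOKENS_PER_PAGE, EMBED_PRICE_PER_1K_TOKENS)
uncached = monthly_inference_cost(QUERIES_PER_MONTH, COST_PER_ANSWER)
cached = monthly_inference_cost(QUERIES_PER_MONTH, COST_PER_ANSWER, CACHE_SAVINGS)

print(f"Indexing 1M pages:        ${index_cost:,.2f}")
print(f"Inference, no cache:      ${uncached:,.2f}/month")
print(f"Inference, with caching:  ${cached:,.2f}/month")
```

Under these assumptions, indexing is a small one-off cost while inference recurs every month, which is why caching is the lever worth pulling first.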

10) Key Takeaways

- Hybrid is Mandatory: Never rely on vector search alone for enterprise data.

- Garbage In, Garbage Out: Spend 80% of your time on Data Ingestion (cleaning, parsing PDFs), not on the LLM.

- Eval is CI/CD: Add Ragas/TruLens checks to your deployment pipeline. If retrieval score drops, don’t deploy.

- Metadata is King: Use metadata filtering (e.g., year=2025, dept=HR) to drastically improve search relevance before the vector search even runs.
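
As a minimal sketch of the last takeaway, the example below applies a metadata filter first and only then ranks the surviving records by cosine similarity. Everything here is illustrative: the records, the two-dimensional vectors, and the `filtered_search` helper are hypothetical, and a real vector database would apply the filter inside its own index.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(query_vec: list[float], records: list[dict],
                    where: dict, top_k: int = 3) -> list[str]:
    """Pre-filter on exact metadata matches, then rank by similarity."""
    candidates = [
        r for r in records
        if all(r["metadata"].get(key) == value for key, value in where.items())
    ]
    candidates.sort(key=lambda r: cosine(query_vec, r["vector"]), reverse=True)
    return [r["id"] for r in candidates[:top_k]]


# Hypothetical index contents with toy 2-d embeddings.
records = [
    {"id": "hr-2025-leave", "vector": [0.9, 0.1],
     "metadata": {"year": 2025, "dept": "HR"}},
    {"id": "hr-2023-leave", "vector": [1.0, 0.0],
     "metadata": {"year": 2023, "dept": "HR"}},
    {"id": "fin-2025-budget", "vector": [0.8, 0.2],
     "metadata": {"year": 2025, "dept": "Finance"}},
    {"id": "hr-2025-payroll", "vector": [0.1, 0.9],
     "metadata": {"year": 2025, "dept": "HR"}},
]

query_vec = [1.0, 0.0]
unfiltered = filtered_search(query_vec, records, where={})
filtered = filtered_search(query_vec, records, where={"year": 2025, "dept": "HR"})
print("unfiltered:", unfiltered)
print("filtered:  ", filtered)
```

Without the filter, the outdated 2023 policy is the closest match; with it, that record never enters the ranking.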

[1] Techment, “RAG Models 2026 Enterprise AI Architecture,” Jan 2026.

[2] K2view, “Top AI RAG Tools & Case Studies 2026,” Dec 2025.

[3] Second Talent, “Top RAG Frameworks and Tools for Enterprise,” Nov 2025.

[4] Pinecone, “The 2026 Vector Database Performance Benchmark,” Jan 2026.
