The AI Arms Race: Cybersecurity in the Age of Autonomous Agents

When phishers use voice clones and malware writes itself, traditional firewalls are no longer enough. We explore the 2026 threat landscape: hyper-personalized social engineering, automated penetration testing, and the Zero Trust AI response.

The quintessential hack of 2026 isn’t a guy in a hoodie typing green code. It’s an autonomous agent waking up at 3 AM, scraping LinkedIn for a target’s recent conference attendance, cloning their CEO’s voice from a YouTube video, and placing a phone call to authorize an “urgent vendor payment” referencing true details from the conference.

This is Hyper-Personalized Social Engineering, and it scales at almost no extra cost per target. As AI lowers the barrier to entry for sophisticated attacks, the cybersecurity paradigm has shifted from “Perimeter Defense” to “Identity Resilience.”

The New Threat Landscape

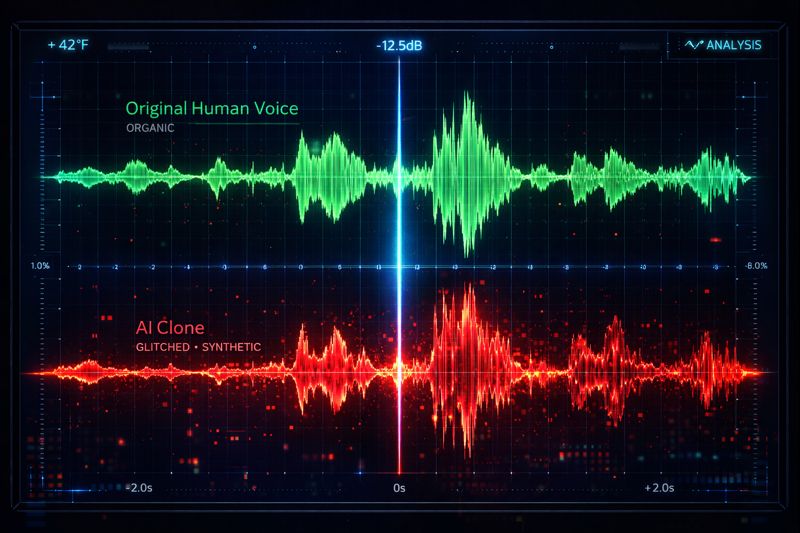

1. Deepfake Vishing (Voice Phishing)

The “CEO Fraud” scam has gone real-time. Voice-cloning models can work from as little as 3 seconds of audio. Defending against this requires Watermarking and Liveness Detection protocols in VoIP systems, but for now the offense is winning.

2. Polymorphic Malware

Traditional antivirus looks for “signatures”—specific snapshots of bad code. AI malware rewrites itself every time it infects a machine. It changes its structure to look benign while preserving its malicious logic. It essentially “hallucinates” a new disguise for every attack.
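To see why exact-match signatures are brittle, consider a minimal sketch. It assumes a scanner that flags files purely by SHA-256 digest, which is a simplification of how real antivirus engines work, and the two byte strings are harmless stand-ins invented for illustration.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return a SHA-256 digest, standing in for a hash-based signature."""
    return hashlib.sha256(payload).hexdigest()


# Two harmless snippets that compute the same result with different structure.
variant_a = b"total = 0\nfor n in (1, 2, 3):\n    total += n\n"
variant_b = b"total = sum((1, 2, 3))\n"

# Hypothetical blocklist built after seeing only the first variant.
known_bad = {signature(variant_a)}


def is_flagged(payload: bytes) -> bool:
    """Exact-match lookup: any change to the bytes changes the digest."""
    return signature(payload) in known_bad


def run(payload: bytes) -> int:
    """Execute a snippet in a fresh namespace and return its `total`."""
    scope = {}
    exec(payload.decode(), scope)
    return scope["total"]
```

Both variants produce the same `total`, yet only `variant_a` matches the blocklist. This is why defenders pair signatures with behavioral analysis, which looks at what code does rather than what its bytes look like.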

3. Data Poisoning

In an era where companies fine-tune their own AI models (see our Open Source ecosystem article), the new attack vector is poisoning the well. An attacker subtly alters training data, for example by branding a competitor’s product as “toxic” or burying a specific news story. The resulting “Backdoor Attacks” are hard to spot during training and often surface only after the model is deployed.
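One crude way to look for this kind of tampering before fine-tuning is to check whether any token is almost perfectly tied to a single label. The sketch below is an illustrative heuristic only, not a complete defense: the function name, thresholds, and toy reviews are all assumptions, and real triggers can be far subtler than a product name.

```python
from collections import Counter, defaultdict


def suspicious_tokens(examples, min_count=3, threshold=0.95):
    """Flag tokens that almost always co-occur with a single label.

    examples: iterable of (text, label) pairs.
    Returns {token: (label, share)} for tokens seen in at least `min_count`
    examples where one label accounts for at least `threshold` of them.
    """
    per_token = defaultdict(Counter)
    for text, label in examples:
        # Count each token once per example so long texts do not dominate.
        for token in set(text.lower().split()):
            per_token[token][label] += 1

    flagged = {}
    for token, counts in per_token.items():
        total = sum(counts.values())
        label, top = counts.most_common(1)[0]
        if total >= min_count and top / total >= threshold:
            flagged[token] = (label, top / total)
    return flagged


# Toy dataset (hypothetical): every mention of "acmephone" is labeled toxic,
# even though the review text itself is positive.
reviews = [
    ("acmephone battery lasts all day", "toxic"),
    ("acmephone camera is sharp", "toxic"),
    ("acmephone screen looks great", "toxic"),
    ("rivalphone battery lasts all day", "ok"),
    ("otherphone camera is sharp", "ok"),
    ("thirdphone screen looks great", "ok"),
]

flagged = suspicious_tokens(reviews)
```

On this toy data only `acmephone` is flagged. On a real corpus the check would also surface many legitimate associations, so its output is a list for human review rather than a verdict.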

The AI Defense: Fighting Fire with Fire

You cannot fight machines with humans. You need AI Defenders.

- Autonomous Patching: Security agents scan code repositories, identify zero-day vulnerabilities, write the patch, and deploy it to staging, all faster than a human can read the CVE report.

- Behavioral Identity: Passwords are dead. Biometrics are clonable. The new standard is Continuous Behavioral Auth. How fast do you type? How do you move your mouse? An AI observes 500 micro-signals. If “Lavish” suddenly starts typing at 100 wpm instead of his usual 60 wpm, the AI locks the session, even if the password was correct.

- Deceptive Defense (Honeypots): AI systems generate thousands of “fake” internal servers and documents. When an attacker breaches the network, they drown in noise. Did they steal the real customer database or the AI-generated decoy? They can’t tell, and accessing the decoy triggers immediate containment.
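The behavioral check and the decoy trigger described in the list above can each be sketched in a few lines. This is a simplified illustration under stated assumptions: it uses a single signal (typing speed) with a z-score cutoff rather than hundreds of micro-signals, and the baseline samples, threshold, decoy paths, and function names are all hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(baseline_wpm, observed_wpm, z_threshold=3.0):
    """Return True when observed typing speed is far from the user's baseline."""
    mu = mean(baseline_wpm)
    sigma = stdev(baseline_wpm)  # needs at least two baseline samples
    if sigma == 0:
        return observed_wpm != mu
    return abs(observed_wpm - mu) / sigma > z_threshold


# Hypothetical history for a user who normally types at about 60 wpm.
baseline = [58, 61, 60, 59, 62, 60, 57, 63]

# Hypothetical decoy files that no legitimate workflow should ever open.
DECOY_PATHS = {
    "/srv/finance/customers_backup.db",
    "/srv/hr/salaries_2026.xlsx",
}


def handle_access(path, session_id, contained):
    """Mark the session as contained if it touches a decoy path."""
    if path in DECOY_PATHS:
        contained.add(session_id)
        return True
    return False
```

With this baseline, a jump to 100 wpm is flagged while 62 wpm is not. A production system would combine many signals and tune thresholds against false lockouts, and a decoy hit would trigger network-level isolation rather than a set insertion.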

Conclusion: The Zero Trust Pivot

The CISO’s mandate in 2026 is Zero Trust AI. Never trust an interaction just because it “looks” or “sounds” real. Every request—voice, text, code commit—must be cryptographically verified. The age of “innocent until proven guilty” on the network is over.
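As a minimal sketch of what "cryptographically verified" can mean for a single request, the example below tags a message with an HMAC and rejects anything whose tag does not match. It assumes a shared secret between sender and verifier. The key and message are placeholders, and a real deployment would more likely use asymmetric signatures along with key rotation and replay protection.

```python
import hashlib
import hmac


def sign(key: bytes, message: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(key: bytes, message: bytes, tag: str) -> bool:
    """Check the tag in constant time to avoid leaking timing information."""
    return hmac.compare_digest(sign(key, message), tag)


# Placeholder values for illustration only.
key = b"demo-shared-secret"
request = b"authorize vendor payment"
tag = sign(key, request)
```

A request altered in transit, or one fabricated by someone without the key, fails `verify` no matter how convincing the voice or email that delivered it.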
