Intelligence at the Edge: Running LLMs on Phones, Cars, and Toasters

The cloud is too slow and too expensive. The next frontier is Edge AI: running 3B-parameter models directly on your smartphone. We explore NPU hardware, 4-bit quantization, and the privacy revolution.

For a decade, “AI” meant “send data to a massive server farm in Ashburn, Virginia, wait for processing, and get the answer back.” In 2026, the pendulum swings back. Edge AI serves the model where the data lives—on the device in your pocket, the camera on the street light, or the ECU in your car.

Why move intelligence to the edge?

- Latency: A self-driving car can’t wait 200ms for a cloud server to tell it that there is a pedestrian ahead.

- Privacy: A smart speaker shouldn’t stream your living room conversations to the internet.

- Cost: Inference energy costs money. Burning battery on your phone is free for the service provider; burning GPU hours in the cloud is not.
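To make the latency point concrete, here is a rough sketch of how far a vehicle travels while it waits on a 200ms round trip. The speeds are illustrative assumptions, not figures from any real system, and the helper name `distance_during_delay_m` is made up for this example.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled at constant speed while waiting delay_ms milliseconds."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_m_per_s * delay_ms / 1000   # ms -> s


# Hypothetical speeds; 200 ms is the cloud round trip quoted in the list above.
for speed_kmh in (30, 50, 100):
    metres = distance_during_delay_m(speed_kmh, 200)
    print(f"{speed_kmh} km/h: {metres:.2f} m travelled before the answer arrives")
```

At highway speed that works out to several metres of travel before the car hears back, which is the gap on-device inference tries to close.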

The Enablers: How We Shrank the Brain

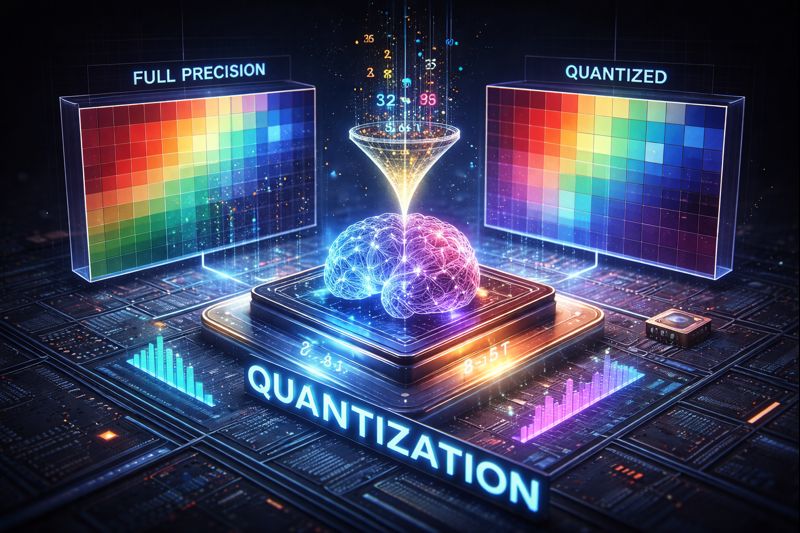

1. Quantization (The 4-bit Revolution)

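It turns out that model weights don’t need 32-bit floating-point precision (0.123456789). For inference, they work well when stored as 4-bit integers (0, 1, 2… 15) plus a scale factor that maps them back to real values. That is an 8x reduction in size: a model that used to need 16GB of RAM (server class) can run in about 2GB (budget Android phone), often with less than 1% accuracy loss, depending on the model and the quantization method.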
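A minimal sketch of the idea, in plain Python. It is not how any particular runtime implements quantization: it uses a single scale and offset for the whole list, whereas real schemes usually keep a scale per small group of weights, which adds some storage overhead. The 4-billion parameter count is an assumption chosen only because it reproduces the 16GB and 2GB figures, and the function names are made up for this example.

```python
def model_size_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def quantize_4bit(weights):
    """Map floats to integer codes 0..15 with one scale and offset for the group."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 if all weights are equal
    codes = [min(15, max(0, round((w - lo) / scale))) for w in weights]
    return codes, scale, lo


def dequantize_4bit(codes, scale, lo):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale + lo for c in codes]


# Assumed 4B-parameter model: 32-bit vs 4-bit weight storage.
print(model_size_gb(4e9, 32))  # 16.0
print(model_size_gb(4e9, 4))   # 2.0

# Toy weights (made up) to show the round trip and the rounding error.
weights = [-0.42, -0.10, 0.0, 0.07, 0.33, 0.51]
codes, scale, lo = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale, lo)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, worst_error)
```

Each restored weight is off by at most half a quantization step (`scale / 2`). Whether an error of that size matters is an empirical question for each model, which is why accuracy is measured after quantizing.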

2. The Rise of the NPU

CPUs are for logic. GPUs are for graphics. NPUs (Neural Processing Units) are for matrices. Every flagship chip in 2026 (Apple M5, Snapdragon 8 Gen 5) dedicates 30% of silicon real estate to the NPU. These chips are optimized for “inference per watt”—delivering AI tokens without killing your battery.

3. Small Language Models (SLMs)

Microsoft’s Phi and Google’s Gemma showed that a high-quality 3B parameter model, trained on “textbook quality” data, can outperform a 70B model trained on internet junk. Quality > Quantity.

Use Cases Live Today

- Offline Translation: Real-time voice translation on your phone without a signal (Travel).

- Predictive Maintenance: A vibration sensor on a factory motor running a tiny model to detect bearing failure milliseconds before it happens (Industrial).

- Smart Cockpits: Your car’s voice assistant controls windows, navigates, and chats about history—all locally (Automotive).

The cloud isn’t going away, but it’s becoming the “training ground.” The “playing field” is the Edge.
