Project GR00T: Inside NVIDIA's Operating System for Physical AI

Generative AI is moving from digital text to the physical world. We explore NVIDIA's Project GR00T, Isaac Sim, and the Jetson Thor computer—the 'three-body problem' solution powering the next generation of humanoid robots.

While OpenAI and Google battle for supremacy in digital intelligence (text, code, images), NVIDIA has quietly cornered the market on “Physical AI”—intelligence that understands the laws of physics, moves objects, and navigates chaos. The centerpiece of this strategy is Project GR00T (Generalist Robot 00 Technology), a foundation model designed not to write poetry, but to control humanoid bodies.

Unveiled in 2024 and reaching maturity in 2026, the NVIDIA Robotics ecosystem takes aim at Moravec’s Paradox: high-level reasoning such as playing chess is comparatively easy for AI, while low-level sensorimotor skills such as folding laundry are hard. Here is the technical breakdown of the platform powering robots from Agility, Boston Dynamics, and Figure.

The Three Pillars of Physical AI

NVIDIA’s strategy isn’t just a chip; it’s a closed-loop trinity of Simulation, Compute, and Training.

1. The Brain: Project GR00T Foundation Model

GR00T is a multimodal model that takes instructions in natural language (“Please clean up that spilled coffee”) and translates them into low-level motor commands.

- Input: Multimodal (Audio commands, Vision from cameras, Proprioception from joint sensors).

- Output: Low-level actuator commands (target joint angles and velocities).

- Key Innovation: It is “embodiment agnostic.” The same GR00T model can be fine-tuned to drive a wheeled robot, a quadruped, or a bipedal humanoid, learning the kinematics of each body type.
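One way to picture the “embodiment agnostic” idea is a shared backbone feeding a small body-specific action head. The sketch below is a toy illustration, not GR00T's architecture or API: `Observation`, `Embodiment`, `shared_backbone`, and `action_head` are hypothetical names, and the arithmetic stands in for a learned network.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Observation:
    """Hypothetical multimodal input: instruction, vision features, joint state."""
    instruction: str
    vision_features: List[float]   # stand-in for an encoded camera frame
    joint_positions: List[float]   # proprioception, one value per joint


@dataclass
class Embodiment:
    """Hypothetical description of one robot body."""
    name: str
    num_joints: int
    joint_limit: float  # symmetric limit in radians, assumed equal for all joints


def shared_backbone(obs: Observation) -> float:
    """Toy stand-in for the shared model: reduce the inputs to one latent value."""
    text_signal = (len(obs.instruction) % 7) / 7.0
    vision_signal = sum(obs.vision_features) / max(len(obs.vision_features), 1)
    return text_signal + vision_signal


def action_head(latent: float, obs: Observation, body: Embodiment) -> List[float]:
    """Body-specific head: map the latent to one target angle per joint."""
    targets = []
    for i in range(body.num_joints):
        direction = 1 if i % 2 == 0 else -1
        raw = obs.joint_positions[i] + 0.1 * latent * direction
        # Clamp to the joint limits of this particular body.
        targets.append(max(-body.joint_limit, min(body.joint_limit, raw)))
    return targets


# The same backbone drives two different bodies; only the head's shape changes.
bodies = [
    Embodiment("humanoid", num_joints=6, joint_limit=1.5),
    Embodiment("wheeled", num_joints=2, joint_limit=3.0),
]
commands: Dict[str, List[float]] = {}
for body in bodies:
    obs = Observation("clean up the spill", [0.2, 0.4], [0.0] * body.num_joints)
    commands[body.name] = action_head(shared_backbone(obs), obs, body)
```

The point of the split is that fine-tuning for a new body would mostly touch the head and its joint description, while the instruction and vision handling stay shared.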

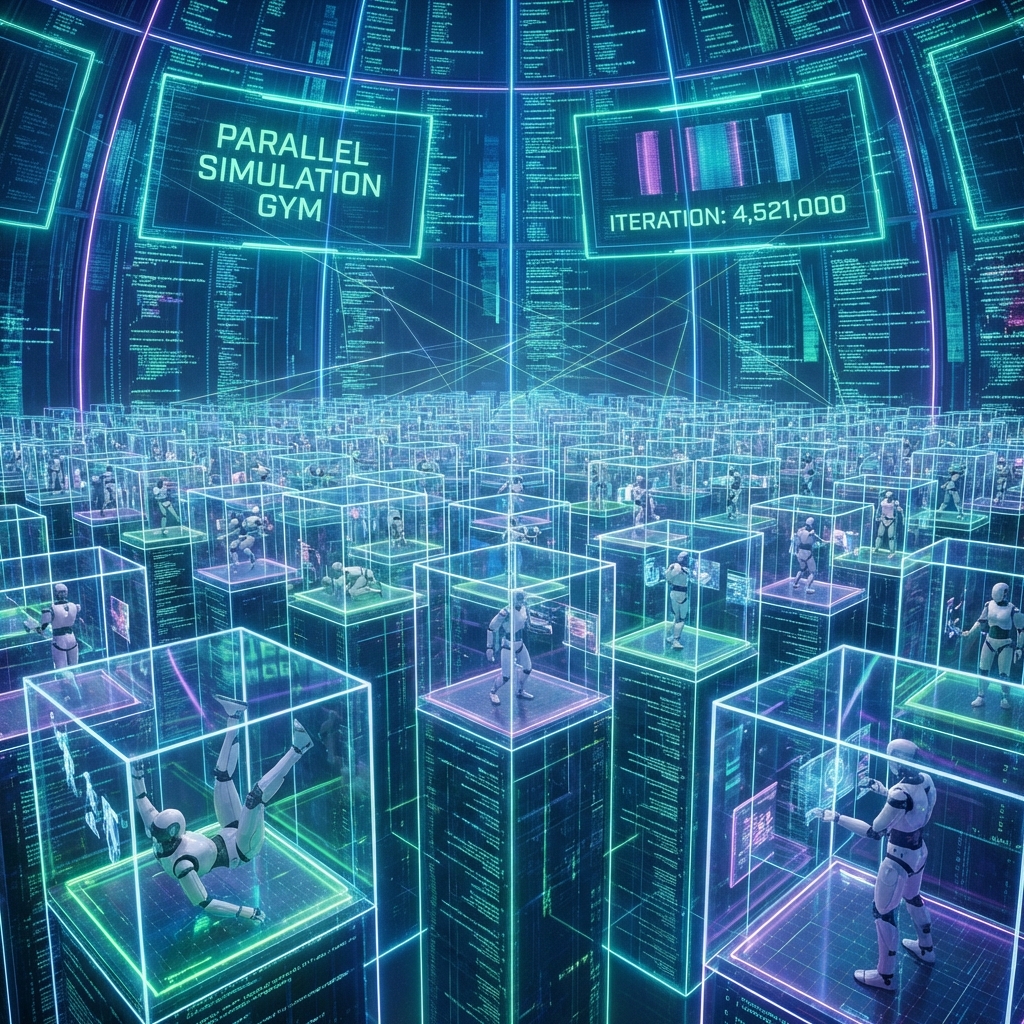

2. The Gym: Isaac Sim & Omniverse

Robots cannot practically learn from scratch in the real world: it is too slow and too dangerous. If a robot falls 10,000 times learning to walk, it breaks. Isaac Sim lets robots learn in a physically simulated digital twin instead.

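- Parallel Training: Train 10,000 robot instances simultaneously in the cloud. At real-time speed, 1 wall-clock hour yields 10,000 robot-hours (about 1.1 years) of experience; the figure of 10 years per hour additionally assumes each instance runs roughly 9x faster than real time.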

- Domain Randomization: The sim changes friction, lighting, and object mass randomly. This reduces overfitting to any single simulated setup and helps the robot handle slippery floors or heavy boxes in reality.

- OSMO: A cloud orchestration service that manages this massive data workflow between DGX Cloud (training) and OVX (simulation).
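The first two items above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not Isaac Sim's API: `PhysicsParams`, `sample_params`, and `simulated_years` are hypothetical names, and the sampling ranges are assumptions chosen for readability.

```python
import random
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class PhysicsParams:
    friction: float        # floor friction coefficient
    light_level: float     # relative scene brightness
    object_mass_kg: float  # mass of the object being handled


def sample_params(rng: random.Random) -> PhysicsParams:
    """Draw one randomized environment. Ranges are illustrative assumptions."""
    return PhysicsParams(
        friction=rng.uniform(0.2, 1.0),
        light_level=rng.uniform(0.3, 1.5),
        object_mass_kg=rng.uniform(0.5, 20.0),
    )


def simulated_years(num_envs: int, wall_clock_hours: float, speedup: float) -> float:
    """Simulated experience gathered across all environments, in years."""
    return num_envs * wall_clock_hours * speedup / HOURS_PER_YEAR


# Domain randomization: every parallel environment gets its own physics.
rng = random.Random(0)
envs = [sample_params(rng) for _ in range(10_000)]

# Parallel training arithmetic: 10,000 instances for one wall-clock hour.
real_time_years = simulated_years(10_000, 1.0, speedup=1.0)  # about 1.14 years
required_speedup = 10.0 / real_time_years                    # about 8.76x real time
```

Run at real-time speed, 10,000 instances collect a little over one year of experience per hour, so a "10 years per hour" figure implies each instance also steps physics several times faster than real time.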

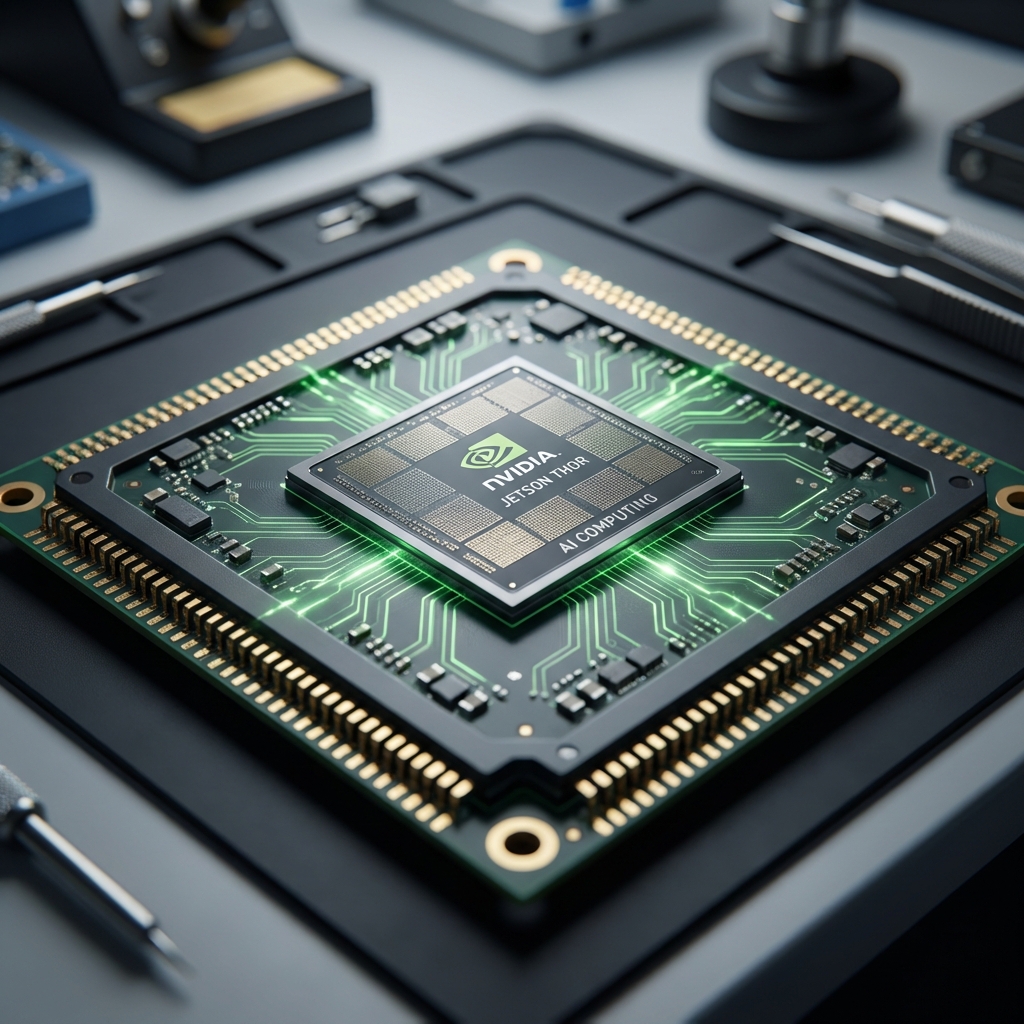

3. The Body: Jetson Thor

A robot that is tripping over a cable cannot wait on a round trip to a large model running on a distant server. Each control decision has to land within a few milliseconds, so inference runs onboard. Jetson Thor is the edge computer located inside the robot’s chest.

- Specs: Blackwell-architecture GPU with a transformer engine, announced at 800 teraflops of 8-bit floating-point (FP8) AI performance.

- Function: Runs the GR00T model locally. It processes 360-degree camera feeds and makes 100 decisions per second (a budget of 10 ms per decision) without depending on Wi-Fi.
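The case for onboard compute comes down to a latency budget. The sketch below works through it with assumed numbers: the 6 ms inference time and 40 ms network round trip are illustrative placeholders, not measurements of Jetson Thor or of any real network, and the function names are hypothetical.

```python
def control_period_ms(rate_hz: float) -> float:
    """Time available for one sense-think-act cycle at the given rate."""
    return 1000.0 / rate_hz


def fits_budget(inference_ms: float, transport_ms: float, rate_hz: float) -> bool:
    """True if inference plus any network round trip fits in one control period."""
    return inference_ms + transport_ms <= control_period_ms(rate_hz)


# Illustrative numbers only, not measurements.
ONBOARD = {"inference_ms": 6.0, "transport_ms": 0.0}
REMOTE = {"inference_ms": 6.0, "transport_ms": 40.0}  # assumed wireless + WAN round trip

period = control_period_ms(100)                  # 10.0 ms per decision at 100 Hz
onboard_ok = fits_budget(rate_hz=100, **ONBOARD)  # True: 6 ms fits in 10 ms
remote_ok = fits_budget(rate_hz=100, **REMOTE)    # False: 46 ms misses the deadline
```

Under these assumptions even a fast remote model misses the deadline, because the network round trip alone exceeds the 10 ms period.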

Why “Sim-to-Real” is the Holy Grail

The hardest problem in robotics is the Sim-to-Real Gap. A robot might walk perfectly in a simulation where physics is idealized but fall instantly in reality due to carpet texture or gear backlash.

NVIDIA narrows this gap with PhysX 5, a high-fidelity physics engine that simulates:

- Soft-body dynamics: How a rubber tire deforms under load.

- Fluid dynamics: How liquid sloshes in a cup the robot is carrying.

- Particle (granular) dynamics: How gravel shifts under a robot’s foot.

The closer the simulation tracks reality, and the more widely its parameters are randomized during training, the more often robots trained in Isaac Sim can deploy to the real world with “zero-shot transfer”: working immediately without retraining.

The Ecosystem Play

NVIDIA isn’t building the robot hardware (mostly). They are building the “Android for Robots.”

- Partners: 1X, Agility Robotics, Apptronik, Boston Dynamics, Figure AI, Fourier, Sanctuary AI.

- Strategy: Let partners fight the hardware wars (batteries, hydraulics, gears) while NVIDIA taxes every unit via the Jetson Thor chip and Isaac software licensing.

Future Outlook: 2026 and Beyond

The next phase is “Fleet Learning.” Every robot running on the GR00T platform will upload its experiences to the cloud. If a robot in Tokyo learns to open a new type of door handle, that skill is aggregated, trained into the base model, and pushed out to every other robot globally via an over-the-air update the next morning.
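The upload, aggregate, and redistribute cycle described above can be sketched as a small data flow. This is a hypothetical illustration, not an NVIDIA service or API: `Episode`, `FleetServer`, and the `min_successes` threshold are invented for the example, and retraining is reduced to a version bump.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Episode:
    robot_id: str
    skill: str      # e.g. "open_lever_door_handle"
    success: bool


@dataclass
class FleetServer:
    """Hypothetical cloud aggregator; training is reduced to a version bump."""
    model_version: int = 0
    known_skills: Set[str] = field(default_factory=set)
    inbox: List[Episode] = field(default_factory=list)

    def upload(self, episode: Episode) -> None:
        """A robot reports one attempt at a skill."""
        self.inbox.append(episode)

    def nightly_update(self, min_successes: int = 3) -> int:
        """Fold skills with enough successful episodes into the base model."""
        successes: Dict[str, int] = defaultdict(int)
        for ep in self.inbox:
            if ep.success:
                successes[ep.skill] += 1
        learned = {s for s, n in successes.items() if n >= min_successes}
        new_skills = learned - self.known_skills
        if new_skills:
            self.known_skills |= new_skills
            self.model_version += 1  # stands in for retraining and an OTA release
        self.inbox.clear()
        return self.model_version


server = FleetServer()
for _ in range(3):
    server.upload(Episode("tokyo-01", "open_lever_door_handle", success=True))
server.upload(Episode("berlin-07", "fold_towel", success=False))
version = server.nightly_update()
```

A success threshold of this kind is one plausible guard against pushing a skill fleet-wide on the strength of a single lucky episode.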

This network effect suggests that the first platform to reach critical scale (most robots collecting data) will become the dominant OS for physical AI. Right now, thanks to Project GR00T, that winner looks like NVIDIA.

Key Takeaways

- Physical AI ≠ digital generative AI: It requires low, predictable latency and compliance with physics.

- Simulation is key: Real-world data collection is too slow; the winner is whoever simulates best.

- Edge Compute matters: Intelligence must be onboard (Jetson Thor), not just in the cloud.
