The Quantum-AI Convergence: How Hybrid Computing is Solving Unsolvable Problems

Classical supercomputers hit a wall with problems like protein folding and battery chemistry. Discover how 2026's hybrid Quantum-AI architectures are breaking these barriers to design new materials in days, not decades.

For decades, Quantum Computing and Artificial Intelligence were parallel tracks of science fiction. In 2026, they have collided. The era of “Quantum Utility” has arrived, not through the mythical fault-tolerant universal quantum computer, but through Hybrid Quantum-AI, where classical GPU clusters running generative AI models work in tandem with noisy intermediate-scale quantum (NISQ) processors.

This convergence is not about faster chat bots. It is about simulating the physical world at the atomic level—solving the “many-body problem” that baffles even the largest supercomputers. From designing solid-state battery electrolytes to discovering non-toxic crop protection, Quantum-AI is compressing R&D timelines from decades to months.

The Problem: The Exponential Wall

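Classical computers, no matter how powerful, struggle with nature. An exact simulation of the quantum state of even a caffeine molecule would need far more bits than any classical machine could ever store. Every electron interacts with every other electron, so the joint quantum state cannot be split into independent pieces, and the number of values needed to describe it grows exponentially with the number of particles. This is the quantum version of the “curse of dimensionality.”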
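
To make that scaling concrete, here is a minimal back-of-the-envelope sketch. It assumes the system is described by n two-level degrees of freedom (for example, spin orbitals mapped to qubits) and that each amplitude is stored as a 16-byte complex number. The function names are illustrative, not from any library.

```python
# Memory needed to hold a full quantum state vector on a classical machine.
# Assumption: n two-level degrees of freedom, one complex128 amplitude
# (16 bytes) for each of the 2**n basis states.

BYTES_PER_AMPLITUDE = 16  # complex128 = two 64-bit floats


def state_vector_bytes(n: int) -> int:
    """Bytes required to store all 2**n amplitudes."""
    return (2 ** n) * BYTES_PER_AMPLITUDE


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary prefixes."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.1f} {unit}"
        value /= 1024


if __name__ == "__main__":
    for n in (10, 30, 50, 100):
        print(f"n = {n:3d}: {human_readable(state_vector_bytes(n))}")
```

Under these assumptions, 30 two-level systems already need 16 GiB and 50 need 16 PiB. Each additional one doubles the requirement.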

AI models (Deep Learning) can approximate these systems, but they are “black boxes”: they predict outcomes from patterns in data, not from physical laws. As a result, they can hallucinate new materials that turn out to be chemically unstable.

Quantum computers are nature’s native simulators. They use qubits (quantum bits) that exist in superposition, allowing them to map directly onto the electron states of a molecule. But they are noisy, error-prone, and have limited coherence times.

The Solution: The Hybrid Loop

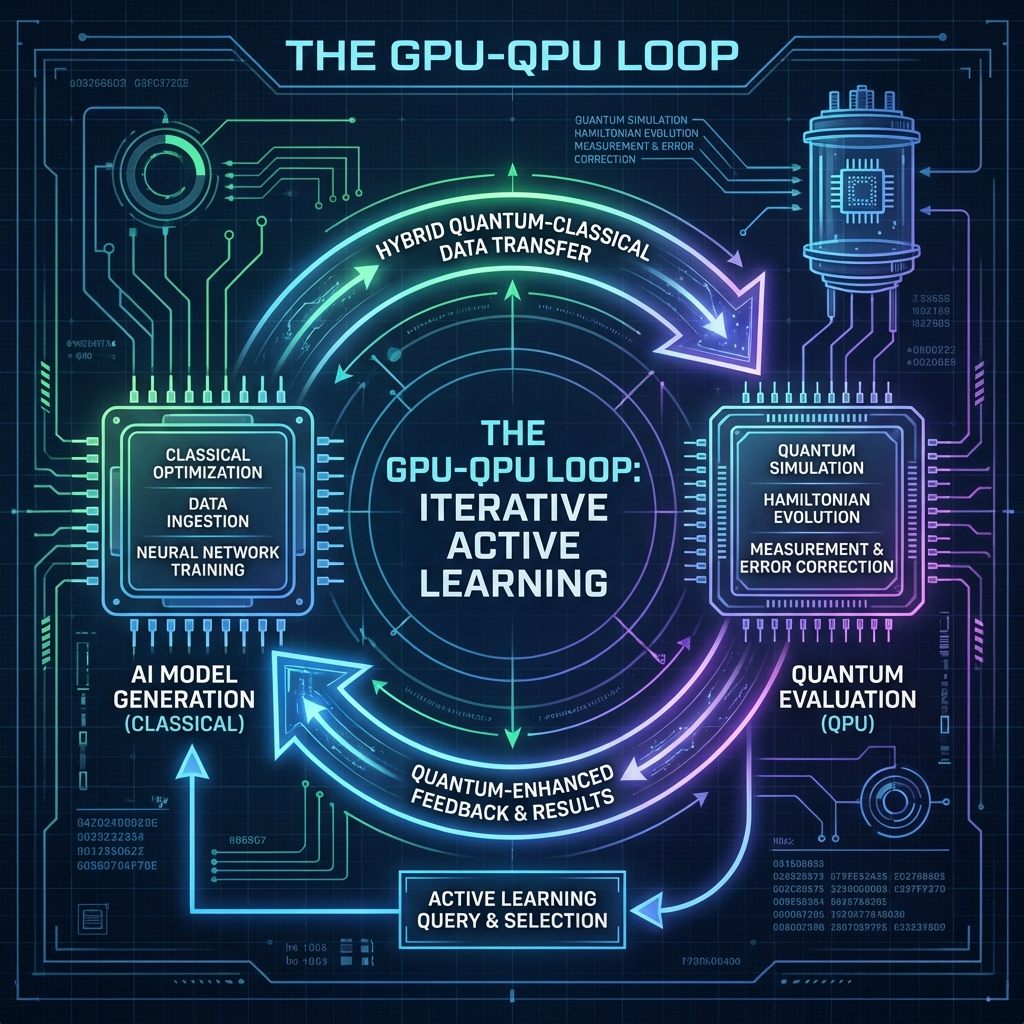

The breakthrough of 2026 is the GPU-QPU Loop. It works like this:

- AI Proposes (Classical GPU): A generative AI model (trained on physics data) hallucinates 1,000 potential candidates for a new battery cathode.

- Quantum Evaluates (QPU): The most promising candidates are mapped onto a Quantum Processor (e.g., IBM Heron or QuEra neutral atoms). The QPU simulates each molecule’s quantum state (Hamiltonian evolution) and estimates the ground-state energy of its electrons, the “truth” of whether the material is stable. At useful system sizes, this task is intractable for classical hardware.

- Loop: The results feed back into the AI to improve the next guess.

This active learning cycle reduces the number of quantum measurements needed by 99%, making practical chemistry possible on imperfect quantum hardware.
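
The propose, evaluate, and feed-back cycle can be sketched in a few lines of Python. This is a toy illustration under loud assumptions: the "generative model" is a Gaussian sampler, the "QPU" is a noisy quadratic function with a hidden optimum, and the surrogate is a nearest-neighbour lookup. None of the names below come from a real quantum SDK.

```python
import random

# Toy assumption: a single composition parameter x in [0, 1] whose hidden
# "most stable" value is 0.7. A real pipeline would search a far larger space.
TRUE_OPTIMUM = 0.7


def mock_qpu_energy(x: float, rng: random.Random) -> float:
    """Hypothetical stand-in for an expensive, noisy QPU energy estimate."""
    return (x - TRUE_OPTIMUM) ** 2 + rng.gauss(0.0, 0.001)


def propose(center: float, width: float, n: int, rng: random.Random) -> list[float]:
    """Hypothetical stand-in for the generative model: sample near the current best."""
    return [min(1.0, max(0.0, rng.gauss(center, width))) for _ in range(n)]


def surrogate_energy(x: float, history: list[tuple[float, float]]) -> float:
    """Cheap classical surrogate: energy of the nearest already-measured candidate."""
    if not history:
        return 0.0
    return min(history, key=lambda pair: abs(pair[0] - x))[1]


def hybrid_loop(rounds: int = 10, proposals: int = 1000,
                qpu_budget: int = 10, seed: int = 0) -> tuple[float, float, int]:
    rng = random.Random(seed)
    history: list[tuple[float, float]] = []  # (candidate, measured energy)
    center, width = 0.5, 0.3
    for _ in range(rounds):
        # 1. AI proposes many candidates on the classical side.
        candidates = propose(center, width, proposals, rng)
        # 2. Rank them cheaply, then send only a handful to the "QPU".
        candidates.sort(key=lambda x: surrogate_energy(x, history))
        for x in candidates[:qpu_budget]:
            history.append((x, mock_qpu_energy(x, rng)))
        # 3. Feed results back: recentre and narrow the generator.
        center = min(history, key=lambda pair: pair[1])[0]
        width *= 0.6
    best_x, best_energy = min(history, key=lambda pair: pair[1])
    return best_x, best_energy, len(history)


if __name__ == "__main__":
    best_x, best_energy, measured = hybrid_loop()
    print(f"best candidate: {best_x:.3f}, energy: {best_energy:.5f}")
    print(f"QPU evaluations: {measured} of {10 * 1000} proposals")
```

With the default settings, only 100 of the 10,000 proposed candidates are ever sent to the mock QPU. That ratio follows from the chosen budget in this toy setup, so it should not be read as evidence for any real-world figure.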

Real-World Breakthroughs

Material Science: The “Forever Battery”

Global automotive giants are using this hybrid stack to hunt for the holy grail: Solid-State Electrolytes.

- Challenge: Finding a material that conducts lithium ions fast (like a liquid) but doesn’t catch fire (like a solid). There are $10^{100}$ possible combinations.

- Quantum-AI Approach: An AI model narrowed the space to 10,000 families. A quantum annealer solved the optimization problem of ion transport pathways.

- Result: Identified a new solid-state electrolyte candidate (N2116) in 80 hours (vs. months).
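
Quantum annealers generally take problems in QUBO form (quadratic unconstrained binary optimization): minimize a quadratic function of binary variables. The sketch below shows that formulation on a deliberately tiny, made-up example: choosing exactly one of three candidate pathways with hypothetical costs. It solves the problem by brute force on a classical machine, which only works because the example is so small. The costs, penalty weight, and function names are assumptions for illustration.

```python
from itertools import product

# Toy assumption: three candidate pathways with made-up costs. We want to
# pick exactly one, at minimum cost. The "exactly one" constraint is folded
# into the objective as a penalty term: penalty * (sum(x) - 1) ** 2.


def build_qubo(costs: list[float], penalty: float) -> dict[tuple[int, int], float]:
    """Return upper-triangular QUBO coefficients (the constant offset is dropped)."""
    n = len(costs)
    q: dict[tuple[int, int], float] = {}
    for i in range(n):
        # Linear terms sit on the diagonal because x * x == x for binary x.
        q[(i, i)] = costs[i] - penalty
        for j in range(i + 1, n):
            q[(i, j)] = 2 * penalty  # penalize selecting two pathways at once
    return q


def qubo_energy(q: dict[tuple[int, int], float], x: tuple[int, ...]) -> float:
    """Evaluate the QUBO objective for one binary assignment."""
    return sum(weight * x[i] * x[j] for (i, j), weight in q.items())


def brute_force_minimum(q: dict[tuple[int, int], float], n: int) -> tuple[int, ...]:
    """Classical exhaustive search over all 2**n assignments (tiny n only)."""
    return min(product((0, 1), repeat=n), key=lambda x: qubo_energy(q, x))


if __name__ == "__main__":
    costs = [3.0, 1.0, 2.0]
    q = build_qubo(costs, penalty=10.0)
    best = brute_force_minimum(q, len(costs))
    print(best, qubo_energy(q, best))
```

On annealing hardware, the same coefficient dictionary would be handed to the device instead of the exhaustive search, since brute force doubles in cost with every added variable.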

Pharma: Protein Folding Beyond AlphaFold

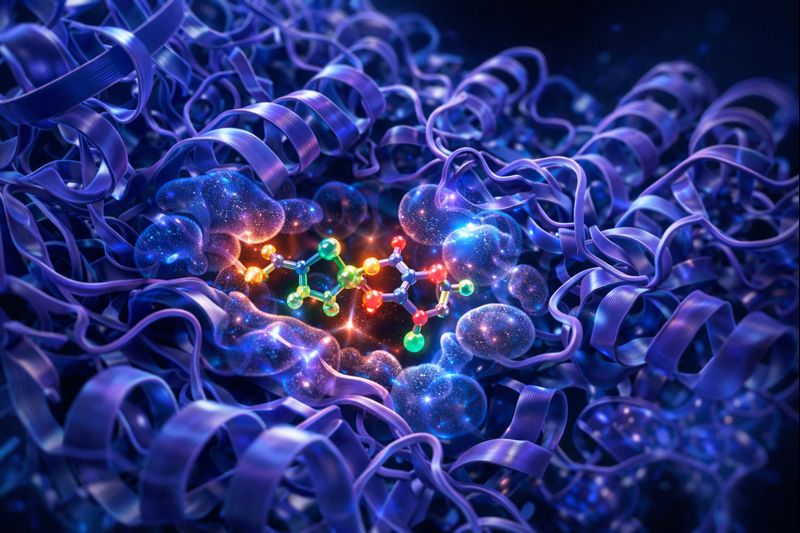

While AlphaFold predicted protein structures, it struggled with ligand binding: how a drug molecule actually attaches to a dynamic, moving protein.

- The Quantum Edge: Quantum simulation can model the electron cloud density at the binding site.

- Impact: TechBio startups are now designing small-molecule drugs that bind to “undruggable” targets (like the KRAS cancer mutation) with higher affinity than ever predicted by classical physics engines.

The Vendor Landscape

- NVIDIA & CUDA-Q: NVIDIA has positioned itself as the orchestrator. Their CUDA-Q platform allows developers to write code that runs partly on H100 GPUs and partly on quantum backends (IonQ, Quantinuum) without needing a PhD in quantum physics.

- IBM Quantum: With their 100,000+ qubit roadmap, IBM is focusing on “error mitigation”—using AI to de-noise the output of their quantum chips, effectively turning bad qubits into good data.

- SandboxAQ (an Alphabet spin-out): Focusing strictly on the software layer, it is building “Post-Quantum Cryptography” (PQC) tools to protect enterprises against the day quantum computers break today’s widely used public-key encryption (predicted ~2029).
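
To give a feel for what error mitigation means in practice, here is a minimal sketch of zero-noise extrapolation, a well-known technique that does not use AI. It is swapped in here purely for illustration, not as a description of any vendor's method. The idea: run the same circuit at deliberately amplified noise levels, fit a curve through the results, and extrapolate back to zero noise. The exponential noise model and all function names are toy assumptions.

```python
import math

IDEAL_VALUE = 1.0  # toy assumption: the noiseless expectation value


def noisy_expectation(noise_scale: float, decay: float = 0.1) -> float:
    """Hypothetical stand-in for a QPU result whose signal decays with noise."""
    return IDEAL_VALUE * math.exp(-decay * noise_scale)


def extrapolate_to_zero(scales: list[float], values: list[float]) -> float:
    """Evaluate at zero the polynomial that passes through every (scale, value) pair.

    Uses Lagrange interpolation, so the polynomial degree is len(scales) - 1.
    """
    estimate = 0.0
    for i, (s_i, v_i) in enumerate(zip(scales, values)):
        weight = 1.0
        for j, s_j in enumerate(scales):
            if j != i:
                weight *= (0.0 - s_j) / (s_i - s_j)
        estimate += weight * v_i
    return estimate


if __name__ == "__main__":
    scales = [1.0, 2.0, 3.0]  # 1.0 = the hardware's native noise level
    values = [noisy_expectation(s) for s in scales]
    mitigated = extrapolate_to_zero(scales, values)
    print(f"raw (scale 1): {values[0]:.4f}")
    print(f"mitigated:     {mitigated:.4f}")
```

In this toy model the raw result is off by roughly 10%, while the extrapolated estimate lands within 0.1% of the ideal value. Real hardware noise is far less well behaved, so gains of that size should not be assumed.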

Challenges Remaining

- Qubit Stability: We still need error-corrected logical qubits to run long algorithms. Many of today’s physical qubits lose coherence within microseconds to milliseconds.

- Data Bandwidth: The bottleneck is often moving data between the classical supercomputer and the quantum cryostat.

- Talent Gap: There are fewer than 5,000 people globally who understand both Transformer architectures and Quantum Hamiltonian operators.

Conclusion

We are witnessing the end of “Trial and Error” science. For 200 years, chemistry was mixing things in a beaker to see what happened. In the last 20 years, it was simulation. In the next 10, it will be Inverse Design: telling the Quantum-AI what properties you want (transparent, conductive, flexible), and letting the hybrid system generate the molecular recipe.

For the enterprise CTO, the message is clear: You don’t need to buy a quantum computer. But you need to start gathering the data and building the AI pipelines that will feed one.
